seahawkfreak said:

Popeyejones said:

As they have been, the Hawks are a very good but very undisciplined team, both on and off the field. It’s part of Carroll’s philosophy and has been discussed ad nauseam at this point.

Other contributing factors:

*Untalented O-linemen get beaten more, which leads to more holding.

*Wilson’s improvisational style of play leads to more offensive holding. His line can’t see him, so when he reroutes in a direction outside the play design, even the best linemen can get grabby. That’s why there isn’t more holding on designed rollouts, but it shows up more for everyone on broken plays or when QBs are escaping pressure laterally.

*A couple of players who just get penalized a lot (e.g., Ifedi, or Bennett on offsides).

*Garden variety cognitive bias, which affects fans of all teams.

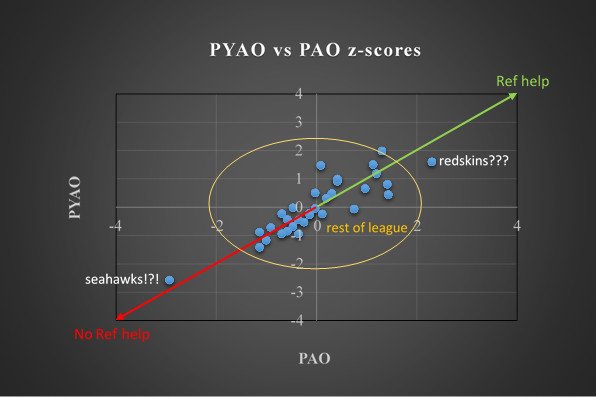

Remember, though, that most of us aren't necessarily complaining about the penalties themselves but about the inconsistency in how similar penalties are called on the opposition (PI calls, holding, etc.). There have been statistical graphs and data to prove that when teams face Seattle, their average number of penalties per game is always significantly lower.

Now, arguments can be made about why this happens, but this is the "bias" that we hate.

Let's focus on the bolded part (the claim that graphs and data prove opponents are penalized significantly less against Seattle), as I think it's a good example of the kind of cognitive bias that affects EVERY fanbase of EVERY team in EVERY team sport.

1) As a fan of the Hawks, you're going to look at the 2014 graph, see it as evidence of bias against your team, and leave it at that. As a fan, what you're likely not gonna do is:

(a) take stock of the fact that these aren't even data for a full season, just for 12 games in 2014 (i.e., three quarters of one season), which makes the N even smaller (see 2-A below for why that really matters).

(b) give much weight to the fact that the Seahawks were middle of the pack on this measure for the full 2013 season, which poses a significant challenge to your bias hypothesis, or to the possibility that nothing at all is going on in these first 12 games of 2014 besides random variance.

(c) say, "Hey, interesting, it looks like teams got penalized less against us across the first three quarters of 2014, but we were average in 2013. That makes me pretty curious to see the data from 2010-2012, the full 2014 season, and 2015-2016."

Again, these red flags aren't gonna slip past you because you're dumb or a bad person, but simply because you suffer from the same cognitive biases and motivated reasoning that fanbases of all teams across all sports suffer from. Because of those biases, even if you do consider them, you're gonna discount them and jump to "well, yeah, but..." rationalizations.

2) Regarding 2014: betaparticle, who wrote the post on Field Gulls, and practically everyone who reads it are Hawks fans, and they're subject to the same cognitive biases that affect every fanbase of every team across every sport. Basically, what I'm saying is that betaparticle likely did this analysis because he's a Hawks fan and because he thought the Hawks were being treated unfairly. That's totally normal, but the fact that his analysis confirms what he already believed, and that readers who also want to believe in referee bias aren't raising counter-hypotheses or pointing out significant problems with the data and his methods, should raise some suspicion for us.

To be clear, I don't think betaparticle is intentionally putting his finger on the scale or acting unethically, I think he's just entering into the analysis with the same cognitive biases that everyone else does. Here's what's going on in that post:

(A)

Deriving statistical significance: To measure probability and statistical significance for the first three quarters of 2014, betaparticle treats his N (i.e., the number of cases) as 192, the number of games played (for a given effect size, the larger the N, the more statistically significant a result looks). That's an error, though, as (1) the observations are not independent and (2) his hypothesis is really about one team. For what he's interested in, his N is actually 12 (three quarters of one season's worth of games).
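To see how much the choice of N alone matters, here is a minimal sketch using a simple one-sample z-test. The effect size of 0.3 (observed difference divided by its per-game standard deviation) is purely hypothetical and is not betaparticle's number; the z-test is also a simplification of whatever test he ran. The sketch only illustrates the N point and does nothing about the non-independence problem.

```python
import math


def two_sided_p(effect_size: float, n: int) -> float:
    """Two-sided p-value of a one-sample z-test.

    effect_size: hypothetical observed mean difference divided by the
    per-observation standard deviation. n: number of observations.
    """
    z = effect_size * math.sqrt(n)           # z = d / (sigma / sqrt(n))
    return math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))


if __name__ == "__main__":
    # Same hypothetical effect, two different choices of N.
    for n in (12, 192):
        print(f"N = {n:3d}: p = {two_sided_p(0.3, n):.5f}")
```

With N = 12 the hypothetical effect is nowhere near significant (p is roughly 0.3); with N = 192 the identical effect comes out at p well below 0.001.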

Another way to think about this (and you can do this at home right now if you want): let's say that instead of 32 teams you have 32 regular dimes, and you're going to flip each dime 12 times to investigate whether some of your dimes are biased toward heads or tails more than others. (There's gonna be some random noise if you actually do it, so the real thing to do would be to simulate this procedure 1,000 times.)

What's gonna happen is you'll get a roughly bell-shaped (binomial, approximately normal) distribution of heads counts across your dimes that will look like this:

https://tinyurl.com/ybu9w6fw
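A minimal Python sketch of the 1,000-repetition version of the dime experiment. The names `lower_cutoff` and `simulate_dimes` are hypothetical, and "looks biased" is assumed to mean landing in the exact two-sided 5% region of the binomial distribution (for 12 flips of a fair dime, that is 2 or fewer or 10 or more heads).

```python
import math
import random


def lower_cutoff(flips: int, alpha: float = 0.05) -> int:
    """Largest k such that seeing <= k or >= flips - k heads has two-sided
    probability <= alpha for a fair dime (exact binomial, no approximation)."""
    cutoff, cdf = -1, 0.0
    for k in range(flips // 2):
        cdf += math.comb(flips, k) / 2 ** flips
        if 2 * cdf > alpha:
            break
        cutoff = k
    return cutoff


def simulate_dimes(n_dimes: int = 32, flips: int = 12, trials: int = 1000,
                   seed: int = 0) -> list[int]:
    """Repeat the 32-dime experiment `trials` times with perfectly fair dimes.

    Returns, for each trial, how many dimes looked "significantly" biased.
    """
    rng = random.Random(seed)
    low = lower_cutoff(flips)
    high = flips - low
    results = []
    for _ in range(trials):
        biased_looking = 0
        for _ in range(n_dimes):
            heads = sum(rng.random() < 0.5 for _ in range(flips))
            if heads <= low or heads >= high:
                biased_looking += 1
        results.append(biased_looking)
    return results


if __name__ == "__main__":
    runs = simulate_dimes()
    share = sum(r > 0 for r in runs) / len(runs)
    print(f"Trials with at least one 'biased' fair dime: {share:.0%}")
```

Every dime in the simulation is fair by construction, so any dime it flags is a false positive produced by the small N.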

What you'll notice is that, across 32 dimes that have each been flipped twelve times, you would EXPECT, JUST BECAUSE OF RANDOM CHANCE AND YOUR SMALL N, that some of these dimes are going to appear to be statistically significantly different from the other dimes.

In fact, in this scenario, just based on random chance and probability alone, it would be WEIRD if some of these dimes didn't appear to have magic heads-landing and tails-landing properties, even though they don't.
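The same question can also be answered exactly with the binomial distribution instead of by simulation. This sketch assumes the same "looks biased" rule as a two-sided 5% test (2 or fewer or 10 or more heads out of 12) and treats the 32 dimes as independent. It works out to roughly a 72% chance that at least one of 32 perfectly fair dimes looks "significantly" biased, with about 1.2 such dimes expected.

```python
import math

FLIPS = 12
N_DIMES = 32

# Chance that one fair dime lands in the low tail (0, 1 or 2 heads out of 12).
low_tail = sum(math.comb(FLIPS, k) for k in (0, 1, 2)) / 2 ** FLIPS
# The high tail (10, 11 or 12 heads) mirrors it for a fair dime.
p_one_dime = 2 * low_tail

# Chance that at least one of 32 independent fair dimes looks "biased".
p_at_least_one = 1 - (1 - p_one_dime) ** N_DIMES
expected_count = N_DIMES * p_one_dime

print(f"P(one dime looks biased)          = {p_one_dime:.4f}")
print(f"P(at least one of 32 does)        = {p_at_least_one:.3f}")
print(f"Expected number of 'biased' dimes = {expected_count:.2f}")
```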

Now, of course, if we flipped each of our 32 dimes 10,000 times, we'd know there's nothing special about our dimes, but this is the problem with small Ns -- it's exactly the problem that is tearing the entire academic discipline of social psychology apart right now.
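A quick way to see why 10,000 flips settles the question while 12 flips cannot: the standard error of a dime's observed heads rate is sqrt(p(1 - p) / n). The sketch below assumes a fair dime (p = 0.5) and uses the rough "about 95% within two standard errors" rule, which is only approximate at n = 12. It puts a fair dime anywhere from roughly 21% to 79% heads after 12 flips, but within about 49% to 51% after 10,000. The function name is hypothetical.

```python
import math


def heads_rate_spread(flips: int, p: float = 0.5) -> float:
    """Standard error of the observed heads rate for a dime with true rate p."""
    return math.sqrt(p * (1 - p) / flips)


for flips in (12, 10_000):
    se = heads_rate_spread(flips)
    # Roughly 95% of fair dimes land within about +/- 2 standard errors of 0.5.
    print(f"{flips:>6} flips: SE = {se:.4f}, typical range 0.5 +/- {2 * se:.3f}")
```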

(B)

Making a small N even smaller: Let's not forget that the data have been collected for 2013 and the first three quarters of 2014, but the graph only shows the first three quarters of 2014. If you can increase your N and have more data available to you, why wouldn't you use it? Maybe because you looked at the raw numbers for 2013, saw instantly that they would weaken your case, and left them out. (Again, I'm not saying betaparticle is a bad person -- not slipping into doing stuff like this is a challenge even for professional academics such as myself.)

(C)

Publication bias: If betaparticle hadn't found anything of note in his analysis, he probably wouldn't have written a post about it, and even if he had, Field Gulls isn't in the business of publishing non-news. This is our dimes experiment all over again, except now it involves the hundreds of little choices that go into an analysis like this (2A is an example of one of these little choices). So suppose each analyst has a 10% chance that their more-or-less arbitrary little choices lead to a statistically significant false positive (like betaparticle's), and there are 10 fans on each of 32 fan boards doing the same thing, for a total of 320 people asking the same question.

On each fan board, one person gets a false positive and nine people don't, so as a first cut, 288 people correctly find that nothing special is going on, and they don't write a blog post about it.

You now have 32 people left who are looking at their false positive, not realizing it's a false positive, and deciding whether it's worth writing a blog post, with the blog editor deciding whether that post (if written) is worth publishing. 30 of the 32 just abandon the project along the way, because the finding is that their team is statistically average, which isn't news for a blog about their team. Then you have the one person who wrongly found that their team is being "privileged" by the officiating, and that's not really a story any fanbase likes to hear or tell itself, so that one is likely gonna get canned too.

In the end, though, because of publication bias, you're down from 320 potential blog posts to just one, and it's the one that confirms the narrative that EVERY FANBASE, of EVERY TEAM, in EVERY SPORT just loooooves to tell itself:
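The 10% false-positive rate in this thought experiment doesn't take many forks to reach. As an illustrative assumption (independent forks, each tested at the 5% level), just two analysis variants give 1 - 0.95^2, about 9.75%, and 320 fans at 10% means about 32 false positives expected. The sketch below computes the first and simulates the second; the function names and the seed are hypothetical.

```python
import random


def forking_path_rate(choices: int, alpha: float = 0.05) -> float:
    """Chance that at least one of `choices` independent analysis variants of a
    pure-noise question comes out "significant" at level alpha."""
    return 1 - (1 - alpha) ** choices


def count_false_positives(boards: int = 32, fans_per_board: int = 10,
                          fp_rate: float = 0.10, seed: int = 1) -> tuple[int, int]:
    """Simulate every fan analysing pure noise; return (analysts, false positives)."""
    rng = random.Random(seed)
    analysts = boards * fans_per_board
    false_positives = sum(rng.random() < fp_rate for _ in range(analysts))
    return analysts, false_positives


if __name__ == "__main__":
    print(f"2 forks at alpha = 0.05: {forking_path_rate(2):.4f}")
    analysts, fps = count_false_positives()
    print(f"{analysts} analysts, {fps} false positives (expected {analysts * 0.10:.0f})")
```

The simulated count bounces around 32 from seed to seed; the filtering by blog writers and editors described above is not modeled here.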

Outside forces are holding my favorite team back. I've always known it, and here's the data to finally prove it!

:2thumbs: